Milan, 2018, backstage at a fashion show. Two top stylists are arguing heatedly over a model's skin tone. One insists it's a typical "Cold Winter," the other swears it's "Pure Spring." Who was right? Both. And simultaneously, neither. The problem wasn't their expertise but the physics of light. The yellow incandescent bulbs by the dressing mirror and the cold blue daylight from the window created an optical illusion that canceled out years of professional experience.

That's when I first began to wonder: if even world-renowned experts fall victim to lighting, how can an ordinary woman trust a subjective opinion? Spoiler: mathematics handles this better. A neural network can determine your color type from a photo with a precision beyond the human eye. We discussed the evolution of this method in more detail in our Complete Guide to Digital Appearance Analysis; in this article, we'll break the process down to the pixel.

Color Anatomy and Metamerism: Why Professionals Get It Wrong

Most people believe that stylists possess some kind of "magical intuition" for color that machines lack. In reality, human vision is deeply vulnerable. Josef Albers's seminal work, "Interaction of Color" (1963), demonstrated that we are physically incapable of perceiving color in isolation. Our brain always evaluates a hue relative to the colors next to it.

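But the stylist's main enemy is metamerism: colors that match under one light source can stop matching under another, so the same blouse, or the same face, can look completely different when the light changes. Fabrics and skin reflect each wavelength of light in different proportions, so when the spectrum of the surrounding light changes (often described by its color temperature, in kelvins), the colors we perceive shift with it.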
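To make the idea concrete, here is a toy numerical model of metamerism. Everything in it is an illustrative assumption: the spectrum is coarsened to four wavelength bins, and the three "sensors" are made-up overlapping bands, not real cone or camera sensitivities. Two different fabrics produce identical sensor readings under a flat light, then stop matching under a warmer light.

```python
# Toy model of metamerism. All numbers are hypothetical: the spectrum is
# reduced to 4 wavelength bins (short -> long), and the three sensor curves
# are invented, overlapping bands rather than real eye or camera responses.
SENSORS = [
    (1.0, 1.0, 0.0, 0.0),  # hypothetical short-wavelength sensor
    (0.0, 1.0, 1.0, 0.0),  # hypothetical middle-wavelength sensor
    (0.0, 0.0, 1.0, 1.0),  # hypothetical long-wavelength sensor
]


def sensor_response(reflectance, illuminant):
    """Return the three sensor readings for a surface under a light source.

    The light reaching the sensors in each bin is illuminant * reflectance;
    each sensor sums that light weighted by its own sensitivity.
    """
    reflected = [e * r for e, r in zip(illuminant, reflectance)]
    return tuple(sum(s * x for s, x in zip(sensor, reflected)) for sensor in SENSORS)


# Two physically different fabrics: fraction of light reflected in each bin.
fabric_a = (0.5, 0.5, 0.5, 0.5)
fabric_b = (0.6, 0.4, 0.6, 0.4)

flat_light = (1.0, 1.0, 1.0, 1.0)  # hypothetical neutral light, equal energy per bin
warm_light = (0.5, 0.8, 1.2, 1.5)  # hypothetical warm light, more long-wavelength energy

print(sensor_response(fabric_a, flat_light))
print(sensor_response(fabric_b, flat_light))
print(sensor_response(fabric_a, warm_light))
print(sensor_response(fabric_b, warm_light))
```

Under the flat light both fabrics read (1.0, 1.0, 1.0), so they are indistinguishable; under the warm light they diverge (about 0.65 / 1.0 / 1.35 versus 0.62 / 1.04 / 1.32). A real spectrum has far more bins, but the mechanism is the same.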

I had a classic case. A client bought a stunning emerald-colored silk blouse for €180 at a boutique with warm halogen lighting. She looked fresh and rested. The next day, she wore it to an office with typical fluorescent lighting. The luxurious emerald turned a sickly swampy color, and her complexion acquired an earthy undertone.

"The human eye can distinguish about 10 million shades, but retinal fatigue, personal preferences, and even the stylist's mood inevitably distort the final verdict."

This is why the traditional method of draping (holding colored fabric swatches up to the face) fails in 30% of cases if not performed in an ideal laboratory environment.

How the Algorithm Works: Determining Color Type from a Photo More Accurately Than the Human Eye

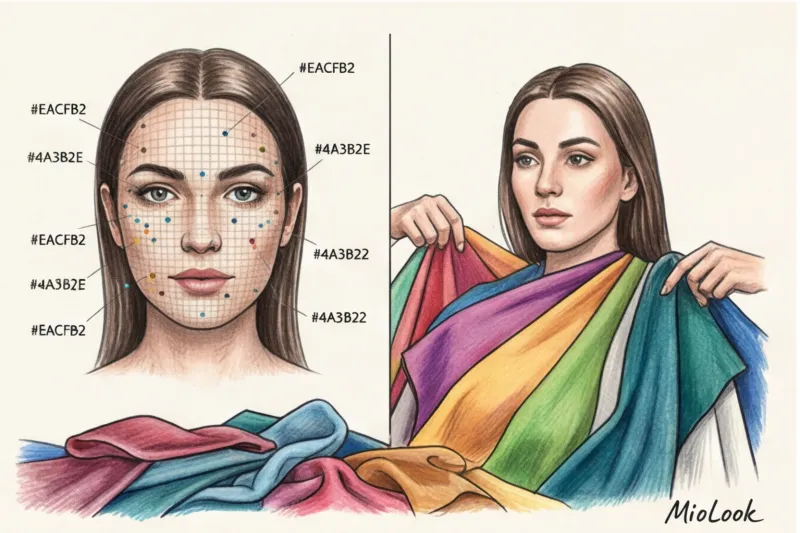

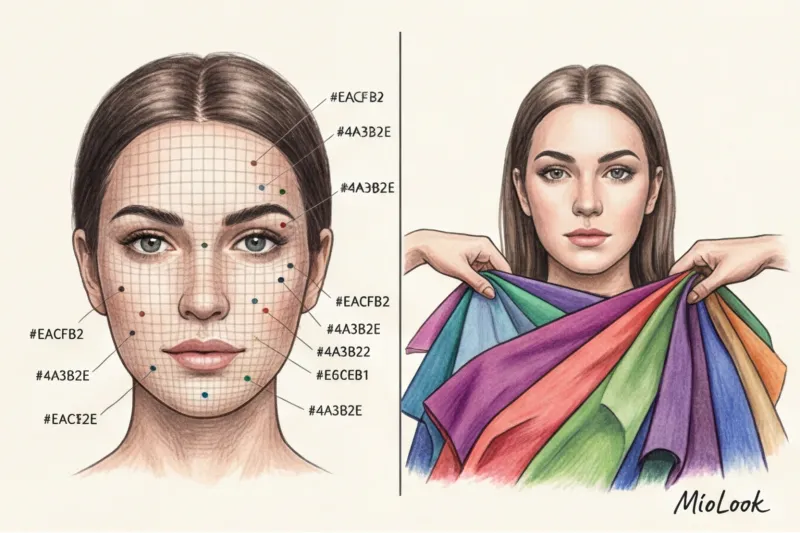

While the stylist evaluates the overall picture, the algorithm relies on the colorimetric standards of the International Commission on Illumination (CIE). The neural network doesn't look at your face in the traditional sense: it breaks it down into hundreds of control points.
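One of the CIE's device-independent color spaces is CIELAB, where L* is lightness, a* runs from green to red and b* from blue to yellow. Below is a minimal sketch of the standard textbook conversion of an 8-bit pixel into CIELAB, assuming the photo is encoded in sRGB and using the D65 white point. It shows the kind of measurement such a pipeline can work with; it is not a description of any particular app's internals.

```python
def srgb_to_linear(c8):
    """Undo the sRGB transfer curve for one 0-255 channel value."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def rgb_to_lab(rgb):
    """Convert an 8-bit sRGB triple to CIE L*a*b* (D65 reference white)."""
    r, g, b = (srgb_to_linear(c) for c in rgb)

    # Linear sRGB -> CIE XYZ using the standard sRGB (D65) matrix.
    x = 0.4124 * r + 0.3576 * g + 0.1805 * b
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    z = 0.0193 * r + 0.1192 * g + 0.9505 * b

    # D65 reference white in XYZ.
    xn, yn, zn = 0.95047, 1.0, 1.08883

    def f(t):
        # CIE nonlinearity: cube root, with a linear segment near black.
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = f(x / xn), f(y / yn), f(z / zn)
    l_star = 116 * fy - 16
    a_star = 500 * (fx - fy)
    b_star = 200 * (fy - fz)
    return (l_star, a_star, b_star)


# Hypothetical skin pixel sampled from a photo.
print(rgb_to_lab((224, 172, 105)))
```

Working in CIELAB rather than raw RGB is a common choice because distances in it track perceived color differences more closely.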

Artificial intelligence extracts exact RGB and HEX values from specific pixels of your skin, hair roots, and iris. This lets the machine calculate the contrast level of your appearance mathematically. For example, it might express the difference between your skin and your hair as a contrast ratio of 8:1. Numbers like these are objective and reproducible.
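The article doesn't say which formula produces a figure like "8:1," so the sketch below assumes one widely used definition: the WCAG 2.x contrast ratio, computed from the relative luminance of two colors and ranging from 1:1 (identical) to 21:1 (black on white). The skin and hair HEX values are hypothetical samples.

```python
def hex_to_rgb(hex_code):
    """Parse '#RRGGBB' (or 'RRGGBB') into an (r, g, b) tuple of ints 0-255."""
    h = hex_code.lstrip("#")
    if len(h) != 6:
        raise ValueError(f"expected 6 hex digits, got {hex_code!r}")
    return tuple(int(h[i:i + 2], 16) for i in (0, 2, 4))


def relative_luminance(rgb):
    """WCAG-style relative luminance of an sRGB color (0 = black, 1 = white)."""
    def lin(c8):
        c = c8 / 255
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (lin(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(hex_a, hex_b):
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    la = relative_luminance(hex_to_rgb(hex_a))
    lb = relative_luminance(hex_to_rgb(hex_b))
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical sampled values: a light skin tone and dark-brown hair roots.
skin, hair = "#E8C4A8", "#2B1D14"
print(f"{contrast_ratio(skin, hair):.1f}:1")
```

Because the ratio depends only on measured luminance, the same photo always yields the same number, which is what makes it comparable across sessions.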

Machine vision's advantage is especially evident when determining skin temperature. Stylists often confuse cool olive undertones with warm yellow ones, because both can look "golden." A neural network that measures the balance of red and blue in the skin pixels themselves doesn't fall for that visual impression.
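As a deliberately simplified illustration of that red-versus-blue comparison, the sketch below averages a set of sampled skin pixels and labels the undertone from their red-to-blue ratio. It assumes the pixels are already white-balanced, and the `warm_threshold` value is hypothetical; a trained network would learn far subtler features than a single ratio.

```python
def average_rgb(pixels):
    """Mean (r, g, b) over a list of sampled skin pixels."""
    n = len(pixels)
    if n == 0:
        raise ValueError("need at least one pixel")
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))


def undertone(pixels, warm_threshold=1.35):
    """Label skin as 'warm' or 'cool' from its red-to-blue balance.

    warm_threshold is a hypothetical cut-off chosen for illustration only;
    a real system would calibrate it (or learn it) from labeled photos.
    Returns (label, ratio).
    """
    r, _g, b = average_rgb(pixels)
    ratio = r / max(b, 1e-6)  # guard against division by zero
    return ("warm" if ratio >= warm_threshold else "cool"), ratio


# Hypothetical samples from two different faces.
print(undertone([(230, 180, 140), (226, 176, 136)]))
print(undertone([(200, 175, 185)]))
```

Averaging many pixels first keeps a single freckle or shadow from swinging the result.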

Pixels Don't Lie: Eliminating Cognitive Biases

Artificial intelligence doesn't have a favorite color. It won't steer you toward the Fall palette simply because terracotta is ruling the runways this season and the stylist is dying to try it on you.

AI also excels at handling age-related changes. When gray hair appears or skin pigmentation changes, your color type can gradually shift (for example, from a high-contrast Winter to a softer Summer). The machine evaluates your data here and now, while a person often clings to their "old" image of the client.

The Achilles' Heel of Machine Vision: When AI Makes a Mistake

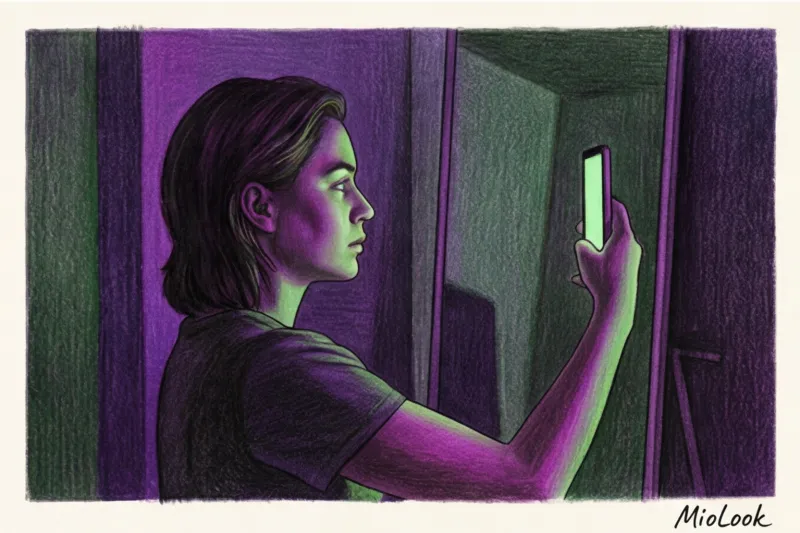

It would be unfair to claim that neural networks are flawless. They have one weakness, captured by the ironclad rule of programming: Garbage In, Garbage Out. The algorithm depends entirely on the quality of the original photo.

The biggest saboteur of digital analysis is the automatic white balance (AWB) in your smartphone camera. In trying to "improve" a shot, the camera often shifts the whole frame toward a yellowish or bluish tint.
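Phone makers don't publish their AWB algorithms, so here is a sketch of one classic textbook approach, the gray-world assumption, to show how "improving" a shot can introduce a cast. Gray-world scales each channel so the frame averages out to neutral gray. The frame below is hypothetical: half warm skin, half red sweater, with no neutral areas at all.

```python
def gray_world_balance(pixels):
    """Gray-world white balance: scale each channel so the channel means match.

    The method assumes the scene averages out to neutral gray. When it
    doesn't (for example, a frame dominated by warm skin and a red sweater),
    the "correction" itself introduces a color cast.
    """
    n = len(pixels)
    if n == 0:
        raise ValueError("need at least one pixel")
    means = [sum(p[i] for p in pixels) / n for i in range(3)]
    gray = sum(means) / 3
    gains = [gray / m if m > 0 else 1.0 for m in means]
    # Apply per-channel gains, clipping at the 8-bit maximum.
    return [tuple(min(255.0, c * g) for c, g in zip(p, gains)) for p in pixels]


# Hypothetical frame: 50 warm skin pixels and 50 red-sweater pixels.
frame = [(225, 180, 150)] * 50 + [(200, 40, 50)] * 50
balanced = gray_world_balance(frame)
print(balanced[0])  # the skin pixel after "correction"
```

On this frame the skin pixel's red channel drops from 225 to about 149 while blue rises from 150 to about 211: the algorithm did exactly what it was designed to do, and the face still ends up with a cold blue-green cast it never had.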

We once tested the app on a selfie taken at sunset (during the so-called "golden hour"). The algorithm faithfully read the pixels and returned the result: "Pure Spring." The problem was that in reality, the woman had a contrasting "Cold Summer." The setting sun bathed the frame in warm yellow light, completely distorting her natural coloring. The same thing happens with makeup or heavy foundation, and with photos taken inside a car with tinted windows.

Expert's Guide: How to Take a Photo That AI Will Read Perfectly

Over years of working with digital tools, I've developed a strict protocol for preparing photos. If you follow these four steps, the neural network will determine your color type with the precision of a laboratory spectrophotometer.

- Light: only diffused daylight. Avoid direct sunlight, ring lights, and incandescent bulbs. Stand facing a window during daylight hours (ideally on a lightly overcast day).

- No colored reflections. Remove every source of color near your face. Wear a gray, white, or black T-shirt that leaves your collarbones visible. A bright sweater (especially neon or red) will cast a colored glow onto your chin, leading the algorithm to conclude that your skin has a warm or reddish undertone.

- Blank canvas. Absolutely no makeup. Hair should be pulled back into a sleek ponytail. If your hair is dyed, wear a white or gray headband, leaving only your natural roots at the hairline visible.

- Stylist's life hack: calibration sheet. Hold a plain white A4 sheet of paper in the frame at chest level. It gives your smartphone camera's AWB a neutral reference to set the white balance against, so your skin tone is captured as accurately as possible.
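The white sheet is useful even after the photo is taken: because we know it should be neutral, its measured color reveals the light's tint. The sketch below assumes the paper pixels have already been located and averaged (`paper_rgb`), and applies a simple per-channel (diagonal) correction, the same idea as von Kries adaptation applied directly to RGB. The readings are hypothetical, and this is an illustration of the principle, not MioLook's actual pipeline.

```python
def white_reference_correct(pixels, paper_rgb):
    """Rescale channels so the sampled white sheet becomes neutral.

    paper_rgb is the average color measured on the sheet. Each channel is
    multiplied by a gain that maps the paper to a neutral gray at the level
    of its brightest channel; results are clipped at 255.
    """
    if min(paper_rgb) <= 0:
        raise ValueError("paper reading must be positive in every channel")
    target = max(paper_rgb)
    gains = [target / c for c in paper_rgb]
    return [tuple(min(255.0, v * g) for v, g in zip(p, gains)) for p in pixels]


# Hypothetical readings from a photo taken under warm indoor light.
paper = (240, 230, 200)  # the "white" sheet looks yellowish
skin = (200, 150, 120)
print(white_reference_correct([paper, skin], paper))
```

After correction the paper becomes neutral (240, 240, 240) and the skin pixel moves from (200, 150, 120) to about (200, 156.5, 144), removing the yellow cast the warm light added.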

An Eco-Friendly Approach: How Knowing Your Color Type Saves the Planet (and Your Wallet)

The shift from intuitive to mathematically calibrated shopping isn't just a matter of aesthetics. It's a fundamental step toward sustainable fashion. According to a McKinsey report (2024), color mismatches between what's onscreen and what's in person, as well as items that "just don't suit you," account for up to 40% of online returns. A significant portion of these returns end up in landfills due to complex sorting logistics.

When you know your exact HEX codes, you stop buying throwaway items. By uploading your palette to the MioLook app, you can automatically sort shades into “yours” and “not yours” when planning a capsule.
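How might shades be sorted into "yours" and "not yours"? One simple, standard option, assumed here rather than taken from the app, is to convert every HEX code to CIELAB and measure the CIE76 color difference (ΔE, the Euclidean distance in L*a*b*) to the nearest palette shade. The palette, the item colors, and the `max_delta_e` tolerance are all hypothetical.

```python
def _hex_to_lab(hex_code):
    """'#RRGGBB' -> CIE L*a*b* (sRGB input, D65 reference white)."""
    h = hex_code.lstrip("#")
    channels = [int(h[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    r, g, b = (c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4
               for c in channels)
    # Linear sRGB -> XYZ, normalized by the D65 white point.
    x = (0.4124 * r + 0.3576 * g + 0.1805 * b) / 0.95047
    y = (0.2126 * r + 0.7152 * g + 0.0722 * b) / 1.0
    z = (0.0193 * r + 0.1192 * g + 0.9505 * b) / 1.08883

    def f(t):
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = f(x), f(y), f(z)
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e76(hex_a, hex_b):
    """CIE76 color difference: Euclidean distance in L*a*b*."""
    la, lb = _hex_to_lab(hex_a), _hex_to_lab(hex_b)
    return sum((p - q) ** 2 for p, q in zip(la, lb)) ** 0.5


def split_by_palette(item_hexes, palette_hexes, max_delta_e=10.0):
    """Sort item colors into (yours, not_yours) by distance to the nearest palette shade.

    max_delta_e is a hypothetical tolerance. A CIE76 difference of about 2.3
    is often quoted as just noticeable, so 10 is deliberately loose.
    """
    yours, not_yours = [], []
    for item in item_hexes:
        nearest = min(delta_e76(item, p) for p in palette_hexes)
        (yours if nearest <= max_delta_e else not_yours).append(item)
    return yours, not_yours


# Hypothetical palette (deep emerald, ivory) and three items being considered.
palette = ["#1B4D3E", "#F5F5DC"]
items = ["#1B4D3E", "#1C4E3F", "#FF00FF"]
print(split_by_palette(items, palette))
```

CIE76 is the simplest ΔE formula; later CIE formulas such as CIEDE2000 track perception more closely at the cost of more arithmetic.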

I always tell my clients: 15 pieces in the right color scheme that complements your appearance will give you three times more combinations than 50 disparate trendy items. Consider that a basic cashmere sweater costs an average of €120–€150. If you choose the wrong undertone, it will sit in your closet like dead weight, a reminder of the money you wasted. Knowing your palette precisely is the best investment you can make in your wardrobe.

Machines don't rob us of our creativity; they free us from costly mistakes. By delegating color analysis to impartial pixels, we gain a foundation upon which to build a truly conscious and high-quality personal style. And intuition is best reserved for choosing perfume or fabric texture.